Anthropic’s new AI agent, Cowork, is expected to have a broader market impact than the company’s earlier flagship product, Claude Code.

Anthropic, a leading player in artificial intelligence innovation, is preparing to introduce its latest general-purpose AI agent, Cowork. According to a senior executive at Anthropic PBC, Cowork is anticipated to reach a significantly wider audience than Claude Code, the startup’s breakthrough product that helped establish its reputation in the AI sector.

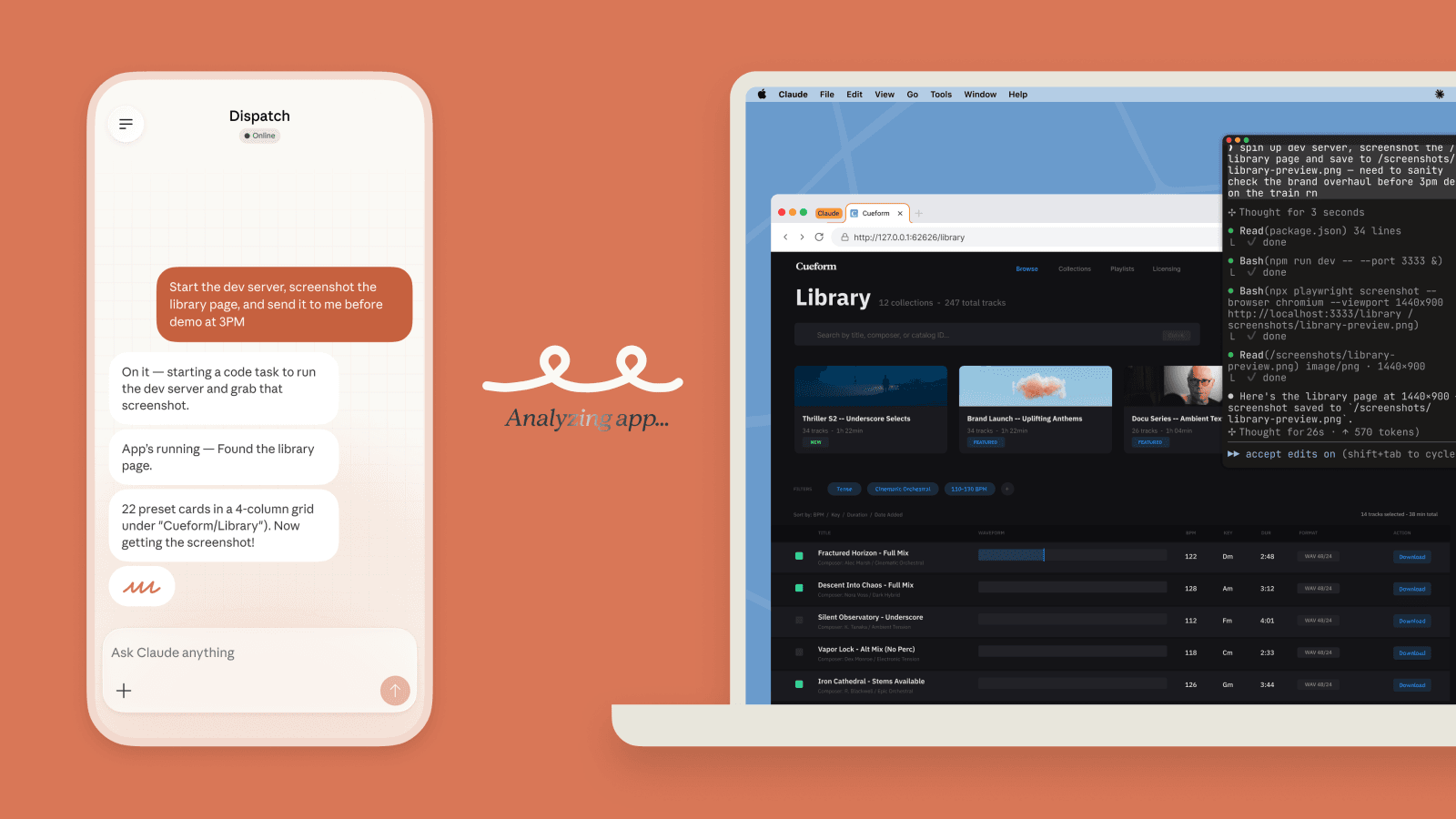

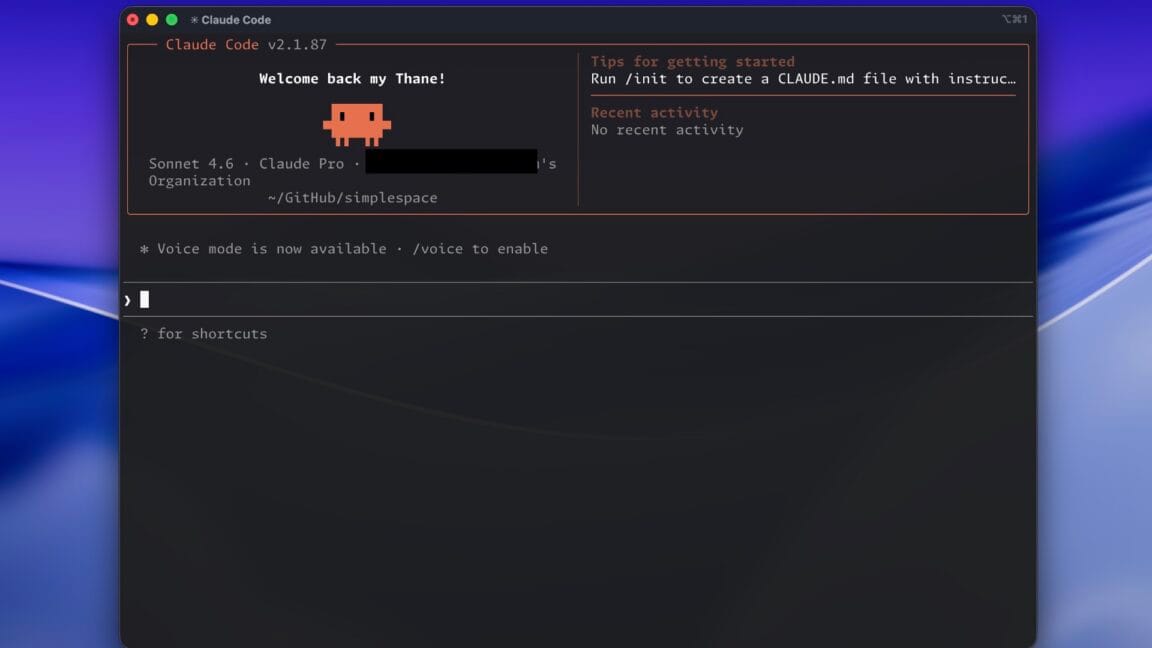

Claude Code, an agentic coding tool that developers run from the command line, has been a key driver of Anthropic’s rise as an AI powerhouse. It lets users hand off multi-step programming tasks, such as editing code across a project and running commands, which has made it a significant productivity tool for technical teams. However, as demand for more versatile AI tools grows, Anthropic is betting that Cowork’s general-purpose approach will open new avenues for adoption beyond that existing, largely technical user base.

Cowork is designed as a collaborative AI assistant that can be integrated into a range of business environments and workflows. This flexibility could make it attractive to executives and operators looking for automation that adapts to varied operational needs. Whereas Claude Code focuses on code-based automation, Cowork aims to offer a broader set of interaction modes intended to make human-machine collaboration smoother.

The implications for businesses are substantial. As automation continues to be a critical driver of operational efficiency, tools like Cowork could enable companies to accelerate digital transformation initiatives without the steep learning curves often associated with AI adoption. This development aligns with trends seen in platforms such as Polymarket and OpenClaw, which also emphasize automation and user-friendly AI integration.

For CEOs and founders monitoring the AI landscape, Anthropic’s shift highlights how AI products are evolving from specialized tools into more versatile agents. This evolution suggests that future AI solutions will prioritize adaptability and ease of use, making them suitable for a wider range of business applications. It also signals increased competition among AI providers to deliver solutions that not only automate tasks but also support decision-making and collaboration.

While Claude Code remains an important part of Anthropic’s portfolio, the company’s leadership presents Cowork as a pivotal product with the potential to reshape the market. As businesses explore how to use AI for strategic advantage, the arrival of Cowork could mark a turning point in how AI agents are deployed across industries.

Anthropic’s approach reflects a broader push in the AI sector toward general-purpose agents that integrate easily with existing tools and workflows. This trend may also shape how companies like Polymarket and OpenClaw develop their own offerings, with an emphasis on automation that is both powerful and accessible. For executives, staying informed about these developments will be key to identifying where AI can be applied effectively.

As Cowork moves closer to launch, attention will turn to whether it delivers on its promised flexibility and broad applicability. The coming months will be critical for understanding how the new agent complements existing AI tools such as Claude and where it fits in the larger automation ecosystem shaping the future of work.

The introduction of Cowork reflects Anthropic’s strategic pivot toward AI products that serve a broader spectrum of business needs. While Claude Code specialized in automating coding tasks and workflows, primarily for technical users, Cowork’s design emphasizes versatility, enabling integration across multiple departments and functions. This approach aligns with growing enterprise demand for AI systems that not only automate routine processes but also improve collaboration and decision-making across teams. For executives, this means AI tools are evolving from niche applications into foundational components of digital business transformation.

In parallel with developments at Anthropic, companies like Polymarket and OpenClaw are also advancing automation technologies aimed at streamlining operations and improving user engagement. Polymarket’s focus on market-based forecasting and OpenClaw’s emphasis on seamless AI integration further illustrate a competitive environment where adaptability and ease of use are becoming critical differentiators. For business leaders, understanding how these platforms complement or compete with Anthropic’s offerings will be important in shaping technology strategies that leverage AI’s full potential.

Looking ahead, the success of Cowork could signal a broader industry trend where AI moves beyond specialized, code-centric tools into more generalized agents capable of handling diverse workflows. This shift could lower barriers to AI adoption, enabling companies of varying sizes and sectors to realize efficiency gains without requiring extensive in-house technical expertise. As automation increasingly influences operational models, executives should monitor how these evolving AI solutions impact workforce dynamics, investment priorities, and competitive positioning in their respective markets.
